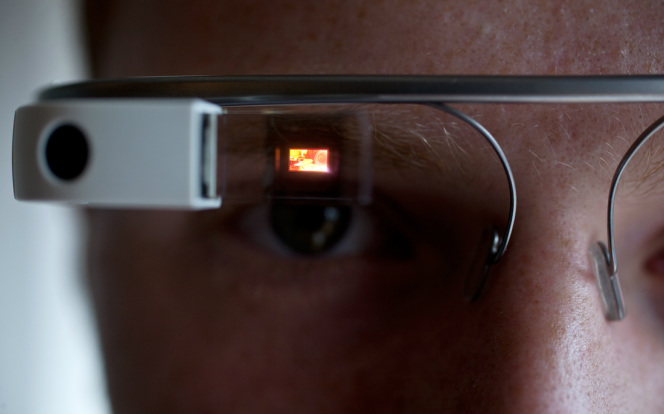

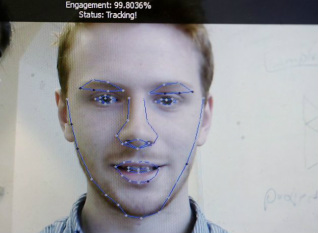

Most of us have heard of Google Glass, the wearable computer with an optical head-mounted display that is being developed by Google. The main function of the device is to display information in a hands-free format, controlled by natural-language voice commands. What could be more incredible than having the things you want to see and know appear in the air before your eyes? Well, Catalin Voss of the company Sension is developing an app for Google Glass that can analyze facial features to discern emotions such as happiness, sadness, and anger.

Voss's development of this app was motivated by the prospect of helping autistic people learn to communicate and socialize better with others, a motivation partly influenced by his autistic cousin. Now it has become a gateway to further development in digital interaction, specifically interaction between humans and machines. It is believed that such technology will allow machines to understand humans more thoroughly than words and gestures alone allow. How will this affect new technology and how we deal with it? How will we use this technology among other human beings? If Prince Hamlet, from *Hamlet*, had this technology, wouldn't it have helped him gather more clues about the motives of the people around him? However, his intelligence and attention to detail seem to serve that purpose already. Maybe the technology would better suit his uncle Claudius, who would need it to figure out what is wrong with Prince Hamlet. Appearances can be deceiving, though, which raises the question of whether overreliance on a tool like this would make us more susceptible to deception or less able to think for ourselves.
